What is Software-Defined Storage?


Software-defined storage (SDS) is a technology used in data storage management that intentionally separates the functions responsible for provisioning capacity, protecting data and controlling data placement from the physical hardware on which data is stored.

SDS allows storage hardware to be easily replaced, upgraded, and expanded without uprooting familiar operational procedures or discarding valuable software investments.

Contrast modern SDS principles with hardware-bound designs that inextricably tie storage operations to a specific device or manufacturer: each make and model performs similar functions but implements them differently, rendering them mutually incompatible.

These incompatibilities turn minor hardware updates into major operational overhauls, delayed by laborious data migrations, and the result is costly storage silos.

In their most versatile form, SDS solutions hide proprietary hardware idiosyncrasies behind a layer of virtualization software. Unlike hypervisors, which make a single server appear to be many virtual machines, SDS combines diverse storage devices into centrally managed pools.

The scope of an SDS product may be confined to a small selection of hardware and a short list of functions, especially when offered by a hardware manufacturer consciously limiting where it can be used.

More flexible alternatives from independent software vendors, such as DataCore, support a variety of hardware choices and a rich set of data services.

Figure: Before SDS (isolated storage capacity across unlike storage systems) and after SDS

Without Software-Defined Storage

- Monolithic and siloed storage architecture

- Rigid, hardware-bound storage infrastructure

- Can run into vendor lock-in

- Storage refresh and data migration are tedious

- Premium devices become overloaded

- More hardware must be added whenever capacity runs low

With Software-Defined Storage

- Centrally pooled, fluid software-defined storage architecture

- Flexible and hardware-independent storage infrastructure (hardware is decoupled from storage software)

- Ultimate freedom to choose any storage vendor/model/type

- Non-disruptive storage refresh and data migration

- Balance capacity and load uniformly across diverse and unlike storage systems

- Optimize unused capacity intelligently and defer new hardware expenditure

The Authoritative Guide to Software-Defined Storage

Understand the power of SDS and why now is the time to implement it in your IT department

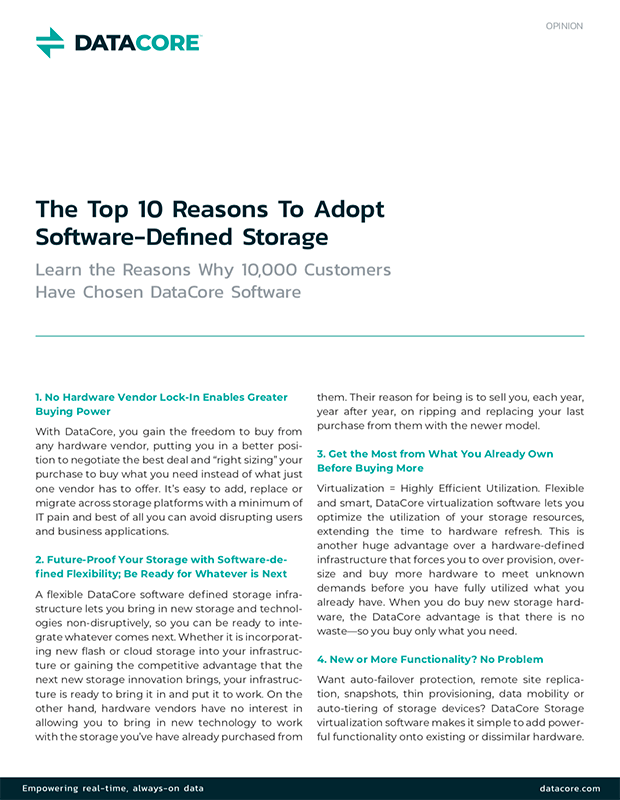

Why Switch to Software-Defined Storage

Most businesses today face major storage challenges such as:

- High costs

- Latency issues

- Storage downtime

- Migration complexity

- Managing silos

- Exponential data growth

That’s exactly where storage virtualization through software-defined storage comes in. SDS is a mature, proven technology, benefiting IT organizations and service providers across verticals and use cases.

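Regardless of what storage hardware you own, it is the software that serves as the center of intelligence: the layer that determines which capabilities, features, services, and benefits can be delivered to optimize your storage systems for your hosts, applications, and end users.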

If you find yourself losing sleep at night due to existing SAN/NAS challenges, then it is time to evaluate software-defined storage solutions. Your organization cannot afford to continue depending on traditional storage and keep throwing hardware at the problem every time you run out of capacity. It is time to modernize your storage architecture and optimize your investments.

The more your SDS product can remove device-specific dependencies and be reconfigured for different deployment and topology models, the more readily you can adapt to new hardware innovations from competing suppliers.

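You should also consider the level of automation and policy-based intelligence. This is especially important for load balancing and for distributing hot, warm, and cold data across tiers of diverse storage systems, whether on premises or in the cloud.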

Find out how storage virtualization technology used in software-defined storage pays you back.

Central Storage Pooling

Imagine combining Pure, NetApp, Dell/EMC, IBM, or any other SAN or NAS arrays in your data center into a single virtual pool.

What if you could stripe your application data across all of them regardless of how fast or slow the devices may be? Well, you can with SDS.
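To make the striping idea concrete, here is a minimal sketch of a virtual pool that splits a volume into fixed-size chunks and places them round-robin across its member arrays. The `Device` and `VirtualPool` classes, the `stripe_size` parameter, and the device labels are hypothetical illustrations, not DataCore's implementation or API; a real SDS layer also handles metadata, redundancy, and device performance differences.

```python
from dataclasses import dataclass, field


@dataclass
class Device:
    """One backing array in the pool (hypothetical model, not a real API)."""
    name: str
    blocks: dict = field(default_factory=dict)  # stripe index -> chunk bytes


class VirtualPool:
    """Hypothetical pool that stripes one volume round-robin across devices."""

    def __init__(self, devices, stripe_size=4):
        if not devices:
            raise ValueError("a pool needs at least one device")
        self.devices = devices
        self.stripe_size = stripe_size

    def write(self, data: bytes) -> None:
        # This sketch models a single volume, so a write replaces prior contents.
        for dev in self.devices:
            dev.blocks.clear()
        # Chunk i goes to device i mod N, regardless of the device's speed.
        for offset in range(0, len(data), self.stripe_size):
            idx = offset // self.stripe_size
            dev = self.devices[idx % len(self.devices)]
            dev.blocks[idx] = data[offset:offset + self.stripe_size]

    def read(self) -> bytes:
        # Gather chunks from every device and reassemble them in stripe order.
        chunks = {}
        for dev in self.devices:
            chunks.update(dev.blocks)
        return b"".join(chunks[i] for i in sorted(chunks))


pool = VirtualPool([Device("pure"), Device("netapp"), Device("dell-emc")])
pool.write(b"hello software-defined storage")
print(pool.read())
print({d.name: len(d.blocks) for d in pool.devices})
```

The application sees one contiguous volume, while its chunks are spread over three unlike arrays. Round-robin is the simplest placement policy; a production system might instead weight placement by free capacity or measured performance.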

Automatic Data Placement

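SDS solutions also provide the ability to move data automatically based on whether it is hot, warm, or cold, and to place it on the most appropriate storage device: premium storage for hot data, secondary storage for warm data, and object storage for cold/archive data.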
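The placement policy described above can be sketched as a classifier plus a rebalancing pass. This is a minimal illustration under stated assumptions: the access-frequency thresholds (`HOT_THRESHOLD`, `WARM_THRESHOLD`), the `Extent` type, and the tier names are hypothetical, and real products typically use richer heat metrics gathered over time.

```python
from dataclasses import dataclass

# Hypothetical thresholds in accesses per day; real systems tune these by policy.
HOT_THRESHOLD = 100
WARM_THRESHOLD = 10

# Mapping from data temperature to tier, mirroring the tiers named in the text.
TIER_FOR_TEMPERATURE = {
    "hot": "premium",
    "warm": "secondary",
    "cold": "object",
}


@dataclass
class Extent:
    """A movable unit of data tracked by the placement engine (hypothetical)."""
    name: str
    accesses_per_day: int
    tier: str = "secondary"


def temperature(extent: Extent) -> str:
    """Classify an extent as hot, warm, or cold by its access frequency."""
    if extent.accesses_per_day >= HOT_THRESHOLD:
        return "hot"
    if extent.accesses_per_day >= WARM_THRESHOLD:
        return "warm"
    return "cold"


def rebalance(extents: list) -> list:
    """Move each extent to the tier matching its temperature; return the moves."""
    moves = []
    for ext in extents:
        target = TIER_FOR_TEMPERATURE[temperature(ext)]
        if target != ext.tier:
            moves.append((ext.name, ext.tier, target))
            ext.tier = target
    return moves


extents = [Extent("db-index", 500), Extent("reports", 25), Extent("2019-logs", 0)]
for name, src, dst in rebalance(extents):
    print(f"move {name}: {src} -> {dst}")
```

Running `rebalance` periodically lets placement follow changing access patterns without manual intervention; only extents whose temperature changed are moved.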

Scale, Migrate, Refresh with Ease

Add, change, and remove storage with ease using SDS, without being bound to any particular storage choice or budget constraint.

If you ever need to replace any of the expensive traditional storage gear, you will have the freedom to find more cost-effective alternatives.

Try software-defined storage solutions for your block, file, and object storage environments today!

SDS Solutions from DataCore: Helping You Thrive on Diversity

With so many vendors promoting SDS products, you may find it hard to distinguish among them. DataCore is an ideal fit for environments with a mixed collection of distributed storage equipment operating in isolation, each piece running disparate functions to achieve similar availability and performance.

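Our customers point to improved services and service levels, gained by extending functionality through software innovation rather than replacing existing devices. The software layer is the secret sauce that makes all the magic happen. DataCore is considered one of the top software-defined storage companies because our platform offers unmatched advantages over traditional NAS (network-attached storage) and SAN (storage area network) equipment. The answer is simple: separating software from hardware is a win for customers.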

Key Advantages of DataCore Software-Defined Storage

Managing storage resources with SDS offers clear benefits in simplicity, efficiency, and cost savings for organizations of any size.

- Get the most out of existing storage investments and reduce CAPEX on new purchases

- Gain the ultimate flexibility to integrate new technologies with existing equipment – no vendor lock-in

- Make your storage infrastructure more efficient with intelligent, policy-driven and automated data placement

- Simplify control and management of storage provisioning and capacity allocation from a central control plane – eliminate storage silos

Understand the key drivers and use cases for the increased adoption of software-defined storage by IT organizations.

DataCore SDS Takes You Further and Beyond

DataCore SDS solutions are extensible and agile, enabling data access via known and/or published interfaces (APIs or standard file, block, or object interfaces) that can extend the capabilities of SDS to integrate with existing and new technologies.

Ultimately, data matters the most to the application, end-user, and business. While virtualized and optimized data storage helps increase storage efficiency, out-of-the-box data services offered by DataCore SDS solutions take you further in your pursuit to maximize the value of your current and future IT investments.

Learn More About Our SDS Solution

If your organization is considering adopting software-defined storage, learn more about SANsymphony.

We have been doing this for a very long time—20 years to be exact. We are considered the SDS authority, and we have the most mature platform in the market.