Benefits

DataCore Software Defined Storage (SDS) takes advantage of the unique capabilities of Intel® Optane™ DC SSDs through targeted integration, whether into existing storage or as part of a new infrastructure. DataCore SDS has a rich feature set and makes the unmatched speed of Intel Optane SSDs instantly available to business applications and enterprise data.

Key Advantages:

Maximize the value of your existing investments

Automate any data placement according to your business needs

Modernize your datacenter without disruption

Achieve even more than with All-Flash-Arrays alone

Performance Dilemma

Today, both the relevance and the amount of data are constantly increasing, and there is no obvious way to keep pace. IT departments must nevertheless provide a good user experience, with responsive applications and fast access to data, and ensure that data is not just “sitting there” but delivering a valuable business contribution.

Leaving aside sporadic network bandwidth problems, the slowest element when accessing data or running applications is the place where the data is stored: the storage system.

Here, Solid State Drives (SSD) provide a fast alternative to classic Hard Disk Drives (HDD). The prevalent technology used for SSDs is Flash, more precisely NAND Flash. It has matured over decades, and many vendors offer All-Flash-Arrays (AFA) that use Flash-based SSDs exclusively.

Compared with HDD-based storage, AFAs promise to be much faster at the same price while consuming less space and energy. Yet even AFAs struggle to keep up with ever-growing data.

Facts About NAND Based Flash

- Flash reads are fast

- Price per TB of Flash is declining, but still more expensive than a TB of HDD

- Flash has limited endurance

- Flash writes can get unexpectedly slow

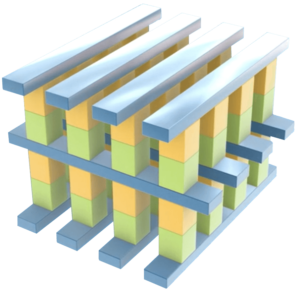

Intel® Optane™, the Most Significant Storage Advancement in Decades

Key Characteristics:

- New storage technology

- Not Flash based

- Byte addressable – like DRAM

- Available in SSD form factor

Key Advantages:

Symmetric Speed

Regardless of read or write – always maximum speed

Performance Under Load

More performance compared to enterprise class Flash

High Endurance

Up to 20x longer lifespan compared to enterprise class Flash

Low Latency & QoS

For read and write without any sudden performance drops

Optane™ and Flash Comparison in a SANsymphony Environment

To capture the performance differences between Flash-based SSDs and Intel Optane SSDs, both technologies were used in the same SANsymphony environment. Each installation was measured with the same load profile at different read/write ratios, ranging from read-only to write-only.

The results are shown with the two key performance parameters for storage: I/Os Per Second (IOPS) and Latency in milliseconds.

Results

- Optane demonstrates a clear performance advantage over Flash

- Optane provides consistently high performance independent of the load, while Flash gets slower as the share of writes increases

- Even at 10% writes and 90% reads, Optane is much faster than Flash

- No need for NVMe-oF to leverage the performance benefits of Optane when integrated with SANsymphony: the results were achieved with traditional Fibre Channel (FC) connections to the load generator server

*Comparison details:

The performance fingerprint is a series of performance tests run with IOmeter against a given storage unit as part of a DataCore SANsymphony storage pool. The test itself consists of 11 individual performance profiles, ranging from 100% read, 0% write to 0% read, 100% write in 10% increments, each at 8 Kilobytes IO size, 100% random, 0% sequential.

The 100% random IO is chosen to simulate the worst-case situation for any kind of caching architecture. This should reveal the raw device performance of the given equipment and also the IO coalescing benefits of the DataCore layer sitting in front of the storage system. The whole series of tests is repeated 10 times to create enough data for analysis, and the results are reported as average values. The test uses 4 workers with 16 outstanding IOs each, running against 4 virtual test devices with one worker per device.
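The test matrix described above can be written down as a short Python sketch. It only enumerates the profiles and load parameters stated in the text; it does not drive IOmeter, and the names `AccessProfile` and `fingerprint_profiles` are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessProfile:
    """One read/write mix of the performance fingerprint (illustrative type)."""
    read_pct: int
    write_pct: int
    block_size_bytes: int = 8 * 1024  # 8 Kilobytes IO size
    random_pct: int = 100             # 100% random, 0% sequential


def fingerprint_profiles(step: int = 10) -> list[AccessProfile]:
    """Enumerate the mixes from 100% read down to 0% read in `step` increments."""
    return [AccessProfile(read_pct=r, write_pct=100 - r)
            for r in range(100, -1, -step)]


# Load parameters as stated in the comparison details.
WORKERS = 4
OUTSTANDING_IOS_PER_WORKER = 16
REPETITIONS = 10

profiles = fingerprint_profiles()
total_outstanding_ios = WORKERS * OUTSTANDING_IOS_PER_WORKER
total_runs = len(profiles) * REPETITIONS

print(len(profiles), total_outstanding_ios, total_runs)
```

With these parameters the series comprises 11 profiles, 64 outstanding IOs in total, and 110 individual runs across the 10 repetitions.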

Flash: 4x Intel® SSD DC P4510 Series (1.0TB, 2.5in PCIe 3 TLC Flash), 1 DWPD / 1,920 TBW

Optane: 4x Intel® Optane™ SSD P4800X Series (375GB, 1/2 Height PCIe x4, 20nm, 3D XPoint™), 30 DWPD / 20,500 TBW
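The two endurance ratings quoted for each drive, drive writes per day (DWPD) and terabytes written (TBW), are related by simple arithmetic. The sketch below assumes a five-year rating period, which is not stated in this document, and reads the quoted endurance figures as 1,920 and 20,500 terabytes written. The function name `dwpd` is hypothetical.

```python
def dwpd(tbw: float, capacity_tb: float, rating_years: float = 5.0) -> float:
    """Drive writes per day implied by a TBW rating.

    rating_years is an assumption (5 years); the document does not state it.
    """
    return tbw / (capacity_tb * 365 * rating_years)


flash_dwpd = dwpd(tbw=1920, capacity_tb=1.0)      # Flash drive, 1.0 TB
optane_dwpd = dwpd(tbw=20500, capacity_tb=0.375)  # Optane drive, 375 GB

print(f"Flash:  {flash_dwpd:.2f} DWPD")
print(f"Optane: {optane_dwpd:.2f} DWPD")
```

Under that assumption the results come out at roughly 1 and 30 drive writes per day, which agrees with the DWPD values listed for the two drives.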

The compared drives were built into a DataCore server (non-mirrored):

2x Intel(R) Xeon(R) CPU E5-2637 v3 @ 3.50GHz (4 Cores / 8 Threads), 128GB RAM (83GB SANsymphony Cache), Windows Server 2016, 5x Fibre Channel 16Gbit/s

The load was generated by a separate server which was also the point of measurement (IOmeter):

2x Intel(R) Xeon(R) CPU E5-2637 v3 @ 3.50GHz (4 Cores / 8 Threads), 128GB RAM, Windows Server 2016, 5x Fibre Channel 16Gbit/s in MPIO Round-Robin

Do more with less – 10/90 rule and what it means for you

Various studies show that in almost every data center a minority of the data causes the majority of all I/Os. On average, across the customer environments we measured in recent years, just 10% of the data was responsible for 90% of all I/Os.

This explains the background of the 10/90 rule. Of course, the detailed numbers are different from environment to environment.

They could be 5/90 or 10/95 or 14/88 and so on. But the important message remains constant: fast storage media for only a small portion of the data is sufficient for an excellent user experience in terms of storage and application responsiveness.
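To check how an environment compares with the 10/90 rule, one can compute the smallest share of data that accounts for a given share of I/Os. The following is a minimal sketch with made-up per-block I/O counts. The function name `hot_fraction` and the sample numbers are hypothetical, not measurements from this document.

```python
def hot_fraction(io_counts: list[int], io_share: float = 0.90) -> float:
    """Smallest fraction of blocks that together receive `io_share` of all I/Os."""
    total = sum(io_counts)
    if total == 0:
        return 0.0
    covered = 0
    # Walk the blocks from busiest to idlest until the target share is reached.
    for n, count in enumerate(sorted(io_counts, reverse=True), start=1):
        covered += count
        if covered >= io_share * total:
            return n / len(io_counts)
    return 1.0


# Illustrative I/O counts for ten equally sized blocks (invented numbers).
sample_counts = [950, 10, 10, 10, 5, 5, 5, 3, 1, 1]
print(hot_fraction(sample_counts))
```

In this invented sample a single block out of ten receives more than 90% of the I/Os, so the function reports a hot fraction of 0.1.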

Unfortunately, the relevance of data changes constantly over its lifespan. So which 10% (or whatever share) of your data is hot and should be stored on the fastest storage media? With DataCore SDS, the answer is that hot data is automatically detected and placed on the appropriate storage media according to its relevance.

This is a dynamic process that continuously happens in the background. It takes the current usage patterns of each data set into account, leverages ML and AI technology, and moves the data to storage media that match its relevance. This ongoing process is fully transparent to the users and applications.

They access their data assets in their familiar way, regardless of where they are physically stored. This functionality, which works across devices and systems from different vendors, is called Auto-Tiering and Auto-Placement.
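The basic idea of relevance-based placement can be illustrated with a deliberately simple sketch: rank data extents by access count and fill the tiers from fastest to slowest. This is not DataCore's algorithm, which the text describes as using ML and AI technology. The function `place_by_heat`, the tier names, and the capacities are all hypothetical.

```python
def place_by_heat(access_counts: dict[str, int],
                  tiers: list[tuple[str, int]]) -> dict[str, str]:
    """Assign extents to tiers, hottest extents to the fastest tier first.

    `tiers` lists (tier_name, capacity_in_extents) from fastest to slowest.
    Extents beyond the total capacity fall back to the slowest tier.
    """
    ranked = sorted(access_counts, key=access_counts.get, reverse=True)
    placement: dict[str, str] = {}
    start = 0
    for tier_name, capacity in tiers:
        for extent in ranked[start:start + capacity]:
            placement[extent] = tier_name
        start += capacity
    for extent in ranked[start:]:
        placement[extent] = tiers[-1][0]
    return placement


# Invented access counts for five extents and three tiers.
counts = {"a": 500, "b": 20, "c": 300, "d": 5, "e": 1}
tiers = [("optane", 1), ("flash", 2), ("hdd", 2)]
placement = place_by_heat(counts, tiers)
print(placement)
```

Re-running such a placement periodically with fresh access counts would move extents between tiers as their relevance changes, which is the behavior the text describes as happening continuously in the background.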


Integration Flexibility

DataCore SDS supports both targeted integration into existing storage environments and the setup of new ones. In either case, Intel Optane is integrated non-disruptively and can be leveraged by all your applications. Additionally, all deployments can be combined and changed as desired: you determine the best option for you.

Key Integration Questions Answered

What would you like to improve?

How will you integrate fast storage media?

What are the total costs?

How to migrate data to the fast storage media?

How to ensure all my data and storage services are available for new storage?

What happens later and when is “later”?