As leaders in software-defined storage, we always have an eye on the market. Many companies are bringing new, compelling, business-relevant innovations to market, and that translates into exciting opportunities to drive business and IT transformation. Let’s take a look at the top storage trends so far in 2020 to see which innovations translate into opportunities for you.

Artificial Intelligence (AI) in storage

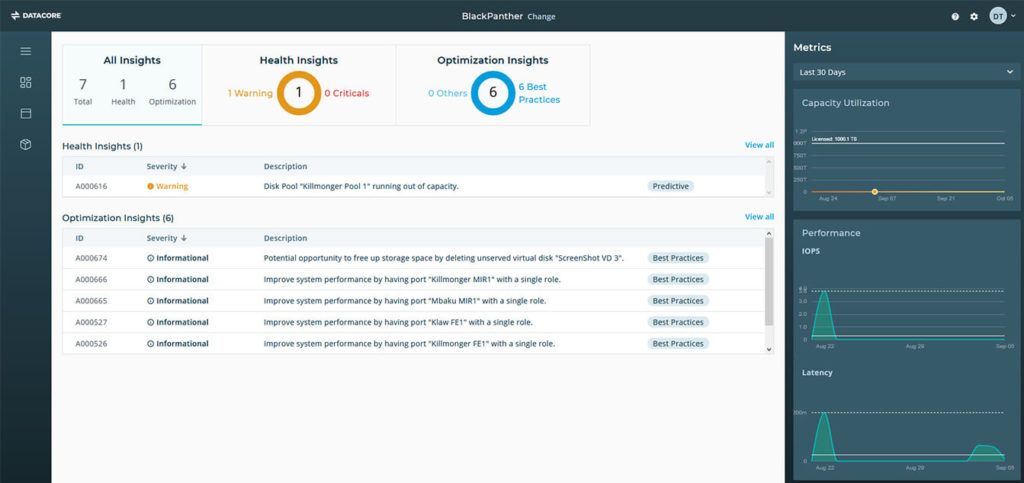

Artificial Intelligence (AI) will play an increasingly important role in storage because, to put it simply, human intervention is becoming an obstacle to success. AI helps to automate data placement by evaluating resources, reassessing models, and executing fuzzy business objectives around criticality, responsiveness and relevance without human intervention. In addition, AI-driven analytics can deliver insights from many massive data sets with greater efficiency, giving organizations predictive insights that don’t require human engagement.

In the world of software-defined storage, AI is already being used to analyze performance and space utilization in order to help administrators make informed decisions about adding capacity, solving bottlenecks, and decommissioning old storage systems. We’ll see more situations in which AI-driven analytics improve storage efficiency, resilience, and cost effectiveness, so more software-defined storage vendors will include it.
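As a rough illustration of the capacity analysis described above, the sketch below fits a straight line to daily used-capacity samples and estimates how many days remain before a pool fills. The function name, the sample figures, and the choice of plain least squares are assumptions made for illustration; commercial analytics are likely to use richer models.

```python
def days_until_full(used_tb, capacity_tb):
    """Estimate days until a pool fills, from equally spaced daily samples.

    Fits used = slope * day + intercept by ordinary least squares.
    Returns None when usage is flat or shrinking.
    """
    n = len(used_tb)
    if n < 2:
        raise ValueError("need at least two samples")
    days = range(n)
    mean_x = sum(days) / n
    mean_y = sum(used_tb) / n
    sxx = sum((x - mean_x) ** 2 for x in days)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(days, used_tb))
    slope = sxy / sxx  # TB consumed per day
    if slope <= 0:
        return None
    intercept = mean_y - slope * mean_x
    full_day = (capacity_tb - intercept) / slope  # day index when the line hits capacity
    return max(0.0, full_day - (n - 1))  # days remaining after the last sample


# Hypothetical pool: 100 TB total, growing about 1 TB per day.
print(days_until_full([50, 51, 52, 53, 54], 100))
```

A forecast like this gives an administrator an early signal to add capacity or retire an aging system before the pool runs out of space.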

Smarter Storage through AI

As many organizations continue to expand their efforts in predictive, AI-driven data analytics, they’ll need new kinds of storage services. Too many storage platforms lack the scale, performance, cloud connectivity, and ability to handle both intensive and archival requirements. You’ll see vendors drive software-defined storage that provides an expansive and unified storage platform that silently tunes itself through AI, adapting effortlessly to handle both unstructured and archival data sets from the same interface.

Greater resiliency from hybrid multi-cloud storage

Cloud storage has become almost synonymous with elastic capacity. Today, organizations have data stored across multiple public clouds, private clouds, and on-premises infrastructure. We expect this to be the year organizations work to herd all those cats, moving inactive unstructured data to the cloud as storage costs decline. That creates a need for software-defined storage that spans multiple public clouds, private clouds, and on-premises infrastructure. Vendors who can span the public/private divide will have an advantage.

The old primary/secondary distinction is getting fuzzy

As data grows and workloads multiply, managing the old primary storage vs. secondary storage approach becomes challenging. Problems of stranded capacity, software incompatibility from one platform to another, and simply less clarity about data placement start driving up costs and complexity while hurting performance and resilience.

Some vendors forecast an all-flash future down the road, where everything sits on high-performance storage. Other vendors insist that hard drives aren’t dead and will always have a place. But everyone agrees on one thing: emerging platforms blur the lines between primary and secondary in order to optimize efficiency and value. That increases the need to do away with manual distinction and segregation of what data goes where. Organizations will start relying more on machine learning intelligence from software-defined tools to automate decision-making on data placement.

Hyperconverged Constraints More Visible

Hyperconverged infrastructure (HCI) has had a good ride, but enough generations of it have passed for organizations to see its limits.

HCI is essentially a one-size-fits-some solution – imagine running Big Data analytics on it and you can begin to see the challenges. It also ends up being over-provisioned or under-provisioned because storage, compute, and network performance have to scale together, regardless of whether the workloads need more compute or more storage.
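The effect of coupled scaling can be shown with simple arithmetic. The sketch below assumes a hypothetical HCI node that bundles a fixed number of cores with a fixed amount of storage; the node size and workload figures are invented for illustration and do not describe any particular product.

```python
import math


def hci_nodes_needed(need_cores, need_tb, cores_per_node, tb_per_node):
    """Size a cluster where compute and storage can only be added together."""
    nodes = max(
        math.ceil(need_cores / cores_per_node),  # nodes required for compute
        math.ceil(need_tb / tb_per_node),        # nodes required for capacity
    )
    return {
        "nodes": nodes,
        "idle_cores": nodes * cores_per_node - need_cores,
        "idle_tb": nodes * tb_per_node - need_tb,
    }


# Hypothetical storage-heavy workload: 64 cores and 400 TB,
# on nodes that each provide 32 cores and 20 TB.
print(hci_nodes_needed(64, 400, cores_per_node=32, tb_per_node=20))
```

In this made-up case the capacity requirement forces 20 nodes, which leaves 576 of the 640 purchased cores idle. Two nodes would have covered the compute requirement on its own.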

We will likely see organizations begin to shift toward modifications of HCI, like disaggregated HCI (dHCI), where storage and compute can scale independently. Others will think about building the right storage infrastructures for each workload, and many of those will be software-defined.

Exploiting Metadata for Practical Use

Once upon a time, storage was about blocks of data, and those blocks resided on disks. Storage trends have changed. Today, the value of data is increasingly governed by its relevance—and relevance is derived from metadata. Metadata empowers organizations to build policy-driven and even AI-driven data management that will, among other things, protect certain files at all costs, automatically move some data sets to the lowest cost storage platforms, and even accelerate performance by keeping some files on the fastest storage. Metadata-driven data management will become a disruptive force in 2020 in the unstructured data storage world, and by 2022 be seen as an essential capability for an organization of any size.
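To make the idea of metadata-driven policy concrete, here is a minimal sketch of a placement rule that looks only at file metadata. The `FileMeta` fields, the tag names, the tier names, and the day thresholds are all hypothetical, chosen only to mirror the three behaviors mentioned above: protecting certain files, moving cold data to low-cost storage, and keeping some files on the fastest tier.

```python
from dataclasses import dataclass


@dataclass
class FileMeta:
    path: str
    days_since_access: int
    tags: frozenset = frozenset()


def choose_tier(meta, hot_days=7, cold_days=180):
    """Return a (tier, protected) placement decision from metadata alone."""
    # Assumed policy: these tags mark data that must be protected.
    protected = "legal-hold" in meta.tags or "critical" in meta.tags
    if "latency-sensitive" in meta.tags or meta.days_since_access <= hot_days:
        tier = "fast"
    elif meta.days_since_access >= cold_days and not protected:
        tier = "archive"  # lowest-cost tier, used only for unprotected cold data
    else:
        tier = "standard"
    return tier, protected


for meta in [
    FileMeta("/projects/render/frame.exr", 1),
    FileMeta("/finance/2015/ledger.csv", 400, frozenset({"critical"})),
    FileMeta("/scratch/old-export.zip", 400),
]:
    print(meta.path, choose_tier(meta))
```

Keeping protected data off the archive tier is a design choice made for this sketch; a real policy engine might instead archive it with additional replicas.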

We’ll see unprecedented performance gains and possibly a new storage tier

We have had a few years of all-flash storage and have gotten familiar with the advantages (and limitations) of SAS flash drives in storage, whether hardware- or software-defined. But this year we’ll see a significant shift toward a new tier of performance (do we call it Tier 0 or Tier 1?). Leading-edge organizations will start adopting NVMe drives and even NVMe over Fabrics (over IP or Fibre Channel) as a way to accelerate time to results and time to insight for their most demanding workloads.

Vendors will tout Storage Class Memory (SCM) as the be-all and end-all of storage performance optimization. Whether or not that proves true, this shift will open up new possibilities for service innovation, but it will also put new demands on networks and compute infrastructure. Software-defined storage (SDS) platforms should be well positioned to take advantage of new drives and network protocols.

NAS consolidation will also accelerate

How many organizations have dozens of storage servers, filers, and unified SAN/NAS platforms that are older than they should be, offer lower performance than they could, and force compromises due to cost and complexity? Software-defined storage vendors have long touted their ability to aggregate these systems under a storage virtualization layer that abstracts the file shares from the underlying hardware. That capability will become more critical than ever as organizations seek to move files between different classes of storage as they age. A single software-defined management layer makes that shift easy, and some software-defined storage vendors offer automated migration as well.

This is the year for software-defined storage

Can you tell we have an opinion about software-defined storage? As a market leader, we see all of its advantages: features like automated migration, built-in AI, and the ability to assimilate and pool existing capacity drive down time, cost, and risk for organizations of all sizes. The old days of adding proprietary block, file, and object storage platforms are beginning to fade. Smart organizations know that agility and versatility come from software, and software-defined storage unlocks possibilities other approaches just can’t. That’s why reports suggest the global software-defined storage market could be worth as much as $7 billion in 2020.

At DataCore, we have become the authority on software-defined storage by helping over 10,000 customers around the world cost-effectively modernize their storage infrastructures to take advantage of new technologies without disruption. We are here to eliminate hardware and vendor lock-in, giving IT departments maximum flexibility. With DataCore, you can have storage that’s smarter, highly efficient, and always available. Schedule a personalized demo with our solution architects to see our software-defined storage solutions in action, and stay tuned to see our predictions for storage trends in 2021!